Researchers at MIT and the MIT-IBM Watson AI Lab are exploring the use of spatial acoustic information to help machines better envision their environments. They developed a machine-learning model that can capture how any sound in a room will propagate through the space, enabling the model to simulate what a listener would hear at different locations.
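To make the idea of simulating what a listener would hear at different locations concrete, here is a minimal sketch in Python. It is not the researchers' model. It assumes the standard signal-processing view that the sound reaching a listener is the emitted sound convolved with an impulse response for that source and listener pair, and it stands in a toy free-field response (a single delayed, attenuated spike with no walls or echoes) where a learned model would go. The function names, the 8 kHz sample rate, and the 0.1 m distance clamp are all illustrative assumptions.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second in air at roughly 20 °C


def impulse_response(source, listener, sample_rate, length):
    """Toy free-field impulse response: one delayed, attenuated spike.

    Hypothetical stand-in for a learned acoustic model. A real room would
    add reflections and reverberation; this keeps only the direct path.
    """
    distance = math.dist(source, listener)
    delay = round(distance / SPEED_OF_SOUND * sample_rate)  # travel time in samples
    response = [0.0] * length
    if delay < length:
        # Inverse-distance attenuation, clamped (assumption) to avoid dividing by zero.
        response[delay] = 1.0 / max(distance, 0.1)
    return response


def convolve(signal, response):
    """Direct (non-FFT) linear convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(response) - 1)
    for i, s in enumerate(signal):
        if s == 0.0:
            continue
        for j, h in enumerate(response):
            if h != 0.0:
                out[i + j] += s * h
    return out


def hear(signal, source, listener, sample_rate=8000, ir_length=512):
    """Simulate what a listener at `listener` hears of `signal` emitted at `source`."""
    response = impulse_response(source, listener, sample_rate, ir_length)
    return convolve(signal, response)
```

Calling `hear([1.0, 0.0, 0.0, 0.0], (0, 0, 0), (3.43, 0, 0))` and then again with the listener at `(6.86, 0, 0)` shows the same click arriving later and quieter at the more distant position. Swapping `impulse_response` for a model that has learned a room's acoustics would, under the same assumption, add that room's reflections to what each listener hears.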

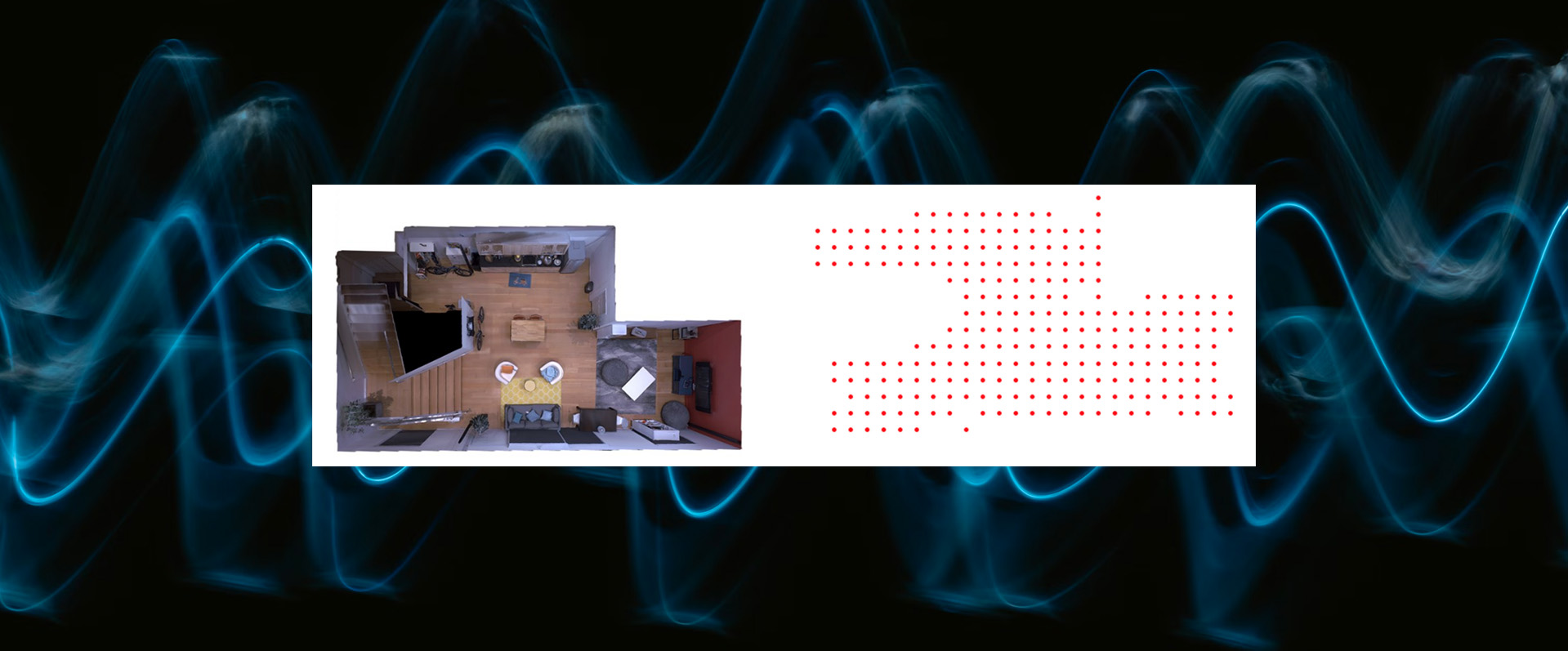

By accurately modeling the acoustics of a scene, the system can learn the underlying 3D geometry of a room from sound recordings. The researchers can use the acoustic information their system captures to build accurate visual renderings of a room, much as humans use sound to estimate the properties of their physical environment.

In addition to its potential applications in virtual and augmented reality, this technique could help artificial-intelligence agents develop a better understanding of the world around them. For instance, by modeling the acoustic properties of the sound in its environment, an underwater exploration robot could sense things that are farther away than it could with vision alone, says Yilun Du, a grad student in the Department of Electrical Engineering and Computer Science (EECS) and co-author of a paper describing the model.

This research is supported, in part, by the MIT-IBM Watson AI Lab and the Tianqiao and Chrissy Chen Institute.